Biomedical engineer Parisa Rashidi and her team collaborated with the UF College of Medicine on an interdisciplinary project, using pervasive sensing technology and AI to perform autonomous and highly detailed monitoring in the Intensive Care Unit (ICU).

Every year, more than 5.7 million adults are admitted to intensive care units (ICUs) in the United States, costing the health care system more than $67 billion. A wealth of information is recorded on each patient in the ICU. Their electronic health records include high-resolution physiological signals, various laboratory tests, and a detailed medical history.

Today, nurses build an index of a patient’s status, in terms of function and reaction to environmental factors in the ICU, by periodically observing the patient and asking a battery of questions. The process is time-consuming and often incomplete due to the patient’s condition. Two researchers at the University of Florida are looking toward a future in which such indices may be constructed from real-time, autonomous observations and data analyses based on artificial intelligence (AI).

Parisa Rashidi, Ph.D., an assistant professor in the J. Crayton Pruitt Family Department of Biomedical Engineering at the Herbert Wertheim College of Engineering, and Azra Bihorac, M.D., the R. Glenn Davis professor of medicine in the UF College of Medicine, are collaborating to improve the assessment process for hospital staff and patients. They recently completed a pilot study in which they examined the feasibility of using pervasive sensing technology and AI for autonomous and highly detailed monitoring in the ICU.

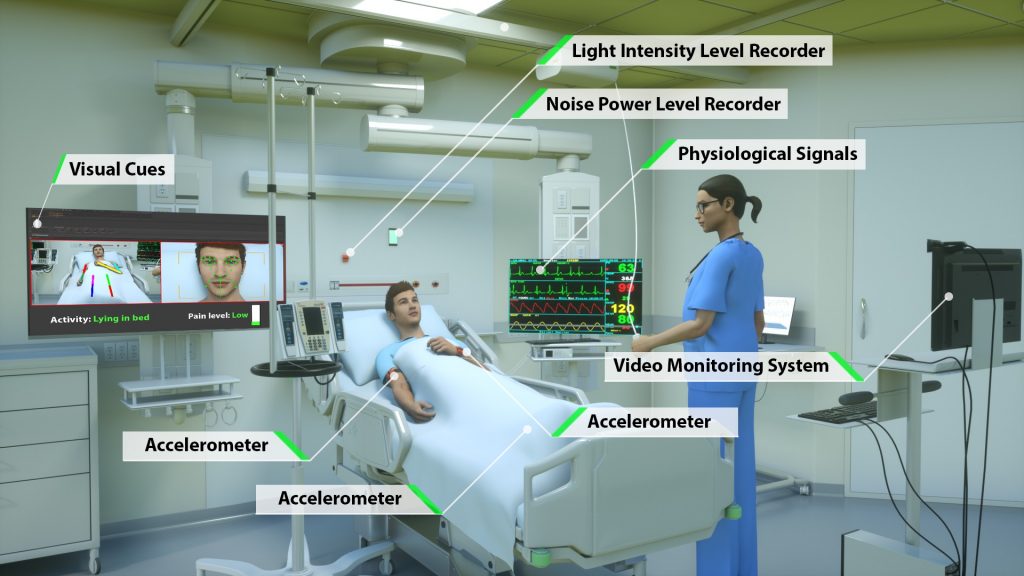

Rashidi, Bihorac and their research team performed the study on delirious patients and controls in a hospital critical care unit. They employed wearable sensors, light and sound sensors, and a camera to collect data on patients and their environment. Their results showed that granular and autonomous monitoring of critically ill patients and their environment is feasible using a noninvasive system. The results also demonstrated the system’s potential for observing and describing critical care patients’ status and the surrounding environmental factors that contribute to sleep disruption and ICU delirium, such as loud background noise, intense room light, and excessive rest-time visits.

Image from Davoudi, Anis, et al. “Intelligent ICU for Autonomous Patient Monitoring Using Pervasive Sensing and Deep Learning.” Nature, Scientific Reports 9 (2019). This work is licensed under the Creative Commons Attribution 4.0 International License. Image created by Ella Maru Studio.

During the study, the researchers collected the data and subsequently analyzed it using algorithms developed by Dr. Rashidi and her engineering students. “AI technology could assist not only in administering repetitive patient assessments in real-time, but also in integrating and interpreting these data sources with EHR data, thus potentially enabling more timely and targeted medical interventions,” Rashidi said. “We were (also) able to determine that facial expressions, functional status involving extremity movement and postures, and environmental factors, including visitation frequency, light and sound pressure levels at night, were significantly different between the delirious and non-delirious patients,” Dr. Bihorac added.

The results of this research project were recently published in Nature’s “Scientific Reports.” The paper characterizes the seven different sensing and monitoring mechanisms that were used in the research, which resulted in the most wide-ranging study of autonomous critical care monitoring to date.

The co-location of medicine and engineering colleges on a single campus at the University of Florida has continued to provide an enhanced setting for researchers to perform interdisciplinary studies at an accelerated rate. “The opportunity for our engineering faculty to collaborate in multidisciplinary studies such as this one enables us to develop and test innovative solutions much more quickly and comprehensively,” said Dr. Forrest Masters, Associate Dean for Research and Facilities at the Herbert Wertheim College of Engineering. “The deep relationship our biomedical engineering program has established with our College of Medicine is evidenced by the remarkable work that was done in this study.”

In future work, the researchers hope to incorporate real-time analysis of the sensor data so that they can provide immediate feedback directly to physicians and nurses, both as indicators of patient status and, potentially, as pointers to possible treatment outcomes.